Harmful websites stay in Google Search because content removal is constrained by law, platform policy, and technical‑indexing limits, which means not every complaint leads to de‑indexing. Reputation management is the structured control of how entities appear in search‑driven information systems; online reputation refers to how search visibility, review signals, and content‑ranking patterns shape user perception of credibility and risk.

What counts as a “harmful” website in search ecosystems?

A harmful website is any online property that spreads misinformation, harassment, invasion of privacy, or other content that damages an entity’s reputation, even if it remains technically compliant with search‑indexing rules. Within Google’s ecosystem, harmful refers to how content affects user safety, trust, and legal‑risk, not just whether it exists on the web. The system evaluates context, source‑type, and user‑experience to decide how severely to treat a page.

Dive Deeper With Our Expert Guides and Related Blog Posts:

What Makes a Website Legally Harmful Under UK Law and Eligible for Takedown

Why Indeed Reviews Are Hard to Remove and What the Platform Policy Actually Covers

In search‑ecosystem terms, harmful content is defined by its potential to alter entity perception through false, misleading, or abusive information. The system distinguishes between pages that contain inaccuracy, harassment, defamation, or data‑leakage, and those that are merely negative or opinionated. The distinction shapes how Google responds to user reports and legal objections.

The mechanism operates through a layered‑evaluation stack. First, Google’s crawlers index the page as part of the general web. Second, the system applies quality, security, and policy‑signals, which can demote, flag, or label content. Third, legal‑removal‑requests or policy‑challenges are evaluated against the host‑country’s rules. The result is that some harmful pages disappear, while others remain in the SERP with modified prominence.

How does Google decide when to remove or de‑index a website?

Google decides to remove or de‑index a website when the content clearly breaches its own policies, or when a court‑enforceable legal order demands it, not based on general reputation‑dislike. The system uses a combination of automated‑filters, policy‑categories, and legal‑compliance checks to determine which URLs are eligible for removal. The process is designed to protect free‑expression while limiting clearly unsafe or illegal content.

Within the ecosystem, removal refers to the removal of specific URLs from the SERP rather than the destruction of the underlying website. The mechanism works through crawling, indexing, and evaluation workflows. When a harmful page is reported, Google’s systems check it against policy‑rules such as hate‑speech, illegal content, and explicit data‑leakage. If the page clearly violates a defined category, Google may suppress or de‑index it.

The impact on search visibility depends on the nature of the violation. A clearly illegal or abusive page may be removed from the SERP and flagged in search‑results. A borderline‑harmful page may be demoted or left visible but unlabelled. The effect on entity perception can be substantial because the page’s presence or absence shapes how users judge the organisation’s trustworthiness.

Why do some harmful pages remain after a takedown request is filed?

Harmful pages remain after a takedown request because many complaints fall outside the narrow legal‑or‑policy‑criteria that search engines and hosting platforms require. The system only acts on clearly defined violations, not subjective‑dispute over facts or reputation. A request that does not meet those thresholds will not trigger de‑indexing, even if the page is damaging.

Within the ecosystem, this limitation arises from the balance between free‑expression, legal‑risk, and platform‑liability. Search engines and host‑providers cannot adjudicate every factual disagreement or harm‑claim. They must rely on codified rules, such as GDPR data‑leakage, defamation‑laws, or copyright‑infringement, which only apply in specific cases. When the content does not meet those criteria, the system retains it in the index.

The mechanism operates through a filter‑chain. The request is first checked against policy‑categories. If the content falls into one, the system may act. If it does not, the page stays indexed. The impact on search visibility is that the harmful page may remain visible in the SERP, altering the user’s perception of the entity. The result is that removal‑requests are not universal‑solutions but tools that apply only in defined conditions.

How do legal frameworks like GDPR and defamation law shape search visibility?

Legal frameworks such as GDPR, copyright, and defamation law shape search visibility by defining the conditions under which content can be removed, demoted, or retained in search results. These rules set the boundaries that search engines must follow when they process takedown‑requests, balancing protection of rights with expression and information access. The system evaluates each case within this framework before deciding on indexing status.

GDPR primarily governs the handling of personal data and the right to be forgotten. Within the ecosystem, this means that certain pages containing personal data may be removed if they meet the criteria, such as being inaccurate, outdated, or irrelevant. The system applies these rules across the web, which can alter the SERP‑profile of an entity with data‑leak risks.

Defamation law sets the standard for false statements that harm reputation. The system uses these rules when evaluating legal‑takedown‑requests, but only when the claim is supported by a court‑order or clear evidence. The mechanism works through jurisdiction‑specific rules, which vary by country and by platform. The result is that search engines can only remove pages that clearly breach local‑law standards, and not those that are merely negative or opinionated.

How do search algorithms interpret credibility and trust around harmful content?

Search algorithms interpret credibility and trust around harmful content by evaluating source‑authority, linking‑patterns, user‑engagement, and the presence of safety‑or‑policy‑signals. The system treats a website’s reputation as a composite of technical, human‑behaviour, and policy‑signals. Harmful content may still appear in the SERP if the host‑site has strong authority signals, even if the page is flagged.

Within the ecosystem, trust refers to how likely the system considers the content to be reliable, while credibility refers to the confidence it assigns to the information. The mechanism evaluates metrics such as domain‑age, secure‑connection, backlink‑quality, and user‑click‑patterns. A page with low‑trust signals but high‑authority‑links may still rank despite being harmful.

The impact on perception is that a harmful page can influence the SERP even when it is flagged. The system may retain it but add safety‑warnings or labels. The user still sees the page, but the perception is shaped by the presence of these signals. The result is that harmful content can coexist with trust‑signals, which complicates the relationship between removal and perception.

How do content‑removal rules interact with review and comment ecosystems?

Content‑removal rules apply to review and comment ecosystems, but they are constrained by the fact that user‑generated content is treated as protected expression unless it clearly breaches policy or law. The system balances the right to free‑expression with the risk of harmful or false reviews. The result is that many negative or borderline‑harmful reviews remain visible in the SERP, even after reports.

Within the ecosystem, reviews and comments are treated as a different category than standard web content. The system applies forum‑and‑platform‑specific rules, which may allow some abusive or false content if it is not clearly illegal. The mechanism operates through a mix of moderation, user‑reporting, and policy‑enforcement, which is not always sufficient to remove every harmful item.

The impact on search visibility is that the review‑cluster can remain in the SERP, influencing the entity’s perception. The system may demote the most egregious cases, but the overall effect is that the harmful‑review ecosystem remains partly intact. The result is that reputation‑management must focus on both content‑removal and content‑enhancement strategies.

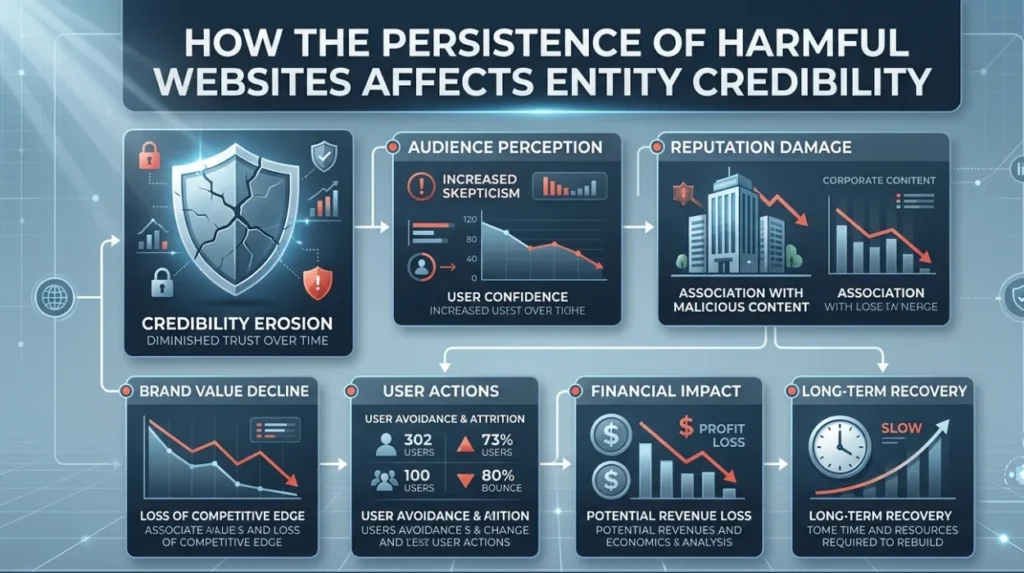

How does the persistence of harmful websites affect entity credibility?

The persistence of harmful websites affects entity credibility by creating a visible gap between the SERP‑representation and the actual‑quality or integrity of the organisation. The system treats the SERP as a proxy‑for‑trust, which means that harmful pages can alter the user’s perception even if the content is disputed. The effect is cumulative and self‑sustaining because of the way search engines weight visibility.

Within the ecosystem, credibility refers to the confidence users place in the entity’s reliability and honesty. The mechanism operates through a combination of ranking‑dynamics, user‑behaviour, and authority‑signals. Harmful websites distort the credibility‑signal by introducing false or misleading information. The result is that the entity’s digital‑footprint becomes more fragmented and less reliable.

The impact on entity‑perception is that the harmful content dominates the SERP‑surface, even if the organisation has many positive‑signals. The system’s reliance on visibility creates a bias toward the most prominent pages. The result is that the persistence of harmful websites can damage the entity’s perceived‑trustworthiness, even when the damage is not justified by the underlying reality.

How do content‑indexing constraints contribute to the problem?

Content‑indexing constraints contribute to the problem because search engines cannot monitor every change to every page in real‑time, which means harmful content can remain in the SERP even after it is removed from the source. The system relies on periodic‑crawling and user‑reports to detect and update its index. The lag between removal and de‑indexing creates a gap where harmful content stays visible.

Within the ecosystem, indexing refers to the process of storing and organising web‑pages for search. The mechanism operates through a distributed‑crawling‑network that cannot inspect every page constantly. The result is that the system may retain outdated or harmful content in the SERP even after the original page is deleted. The effect on perception is that the harmful narrative persists despite the removal.

Harmful websites stay in Google Search because content‑removal is constrained by law, platform‑policy, and technical‑indexing limits. The system must balance free‑expression, user‑safety, and platform‑liability, which means that not every harmful page is de‑indexed, even after a formal takedown request. The result is that the SERP‑representation of an entity can be distorted by visible harmful content, even when the underlying reality is different. The gap between visible information and actual‑quality must be managed through a combination of legal‑action, policy‑challenges, and content‑enhancement strategies. The system’s structure and constraints shape the relationship between removal, visibility, and perception, which is central to the understanding of online‑reputation.

FAQs

Why do harmful websites stay in Google even after reporting them?

Harmful websites often stay in Google Search because takedown requests must meet strict legal or policy criteria, such as clear defamation, data‑protection breaches, or explicit abuse, which many cases do not satisfy. Platforms like Google cannot remove content merely because an entity objects to its reputation impact, so the website may remain indexed but potentially demoted or flagged.

Can website removal services fully delete a harmful page from the internet?

Website removal services can sometimes get harmful pages de‑indexed from Google or removed from the original host, but they cannot guarantee complete eradication if the content is mirrored or republished on other sites. De‑indexing, takedowns, and reputation‑management strategies work together to reduce visibility rather than create total deletion.

What types of content actually qualify for Google removal requests?

Google typically removes pages that clearly breach its policies, including illegal material, exploitative or hateful content, severe privacy violations, and web‑forgery, plus some cases under GDPR “right to be forgotten” or defamation‑court orders. Content that is simply negative, opinionated, or borderline‑harmful but not clearly illegal or policy‑violating often remains indexed.

How long does it take for Google to act on a website removal request?

Google’s response time varies, but policy‑based takedown requests often receive an initial review within days, while legal‑based removals tied to GDPR or court orders can take longer, depending on verification and jurisdiction. Even after approval, full de‑indexing may lag due to crawling and caching cycles, which is why the harmful page can still appear temporarily.

What can businesses do when harmful sites stay in search results?

Businesses can focus on reputation‑signals such as authoritative content, positive reviews, and verified profiles to dilute the impact of harmful websites in the SERP. Paired with targeted removal requests where possible, this approach helps control search visibility and reduces the perceived weight of the damaging pages over time.