Indeed reviews are hard to remove because the platform’s moderation rules prioritise user-generated content, authenticity, and consistency over selective removal requests, which limits manual intervention. Reputation management is the structured control of how entities appear in search-driven information systems; online reputation refers to how search visibility, review signals, and content-ranking patterns shape users’ perception of a business’s credibility.

What are Indeed reviews and how do they form?

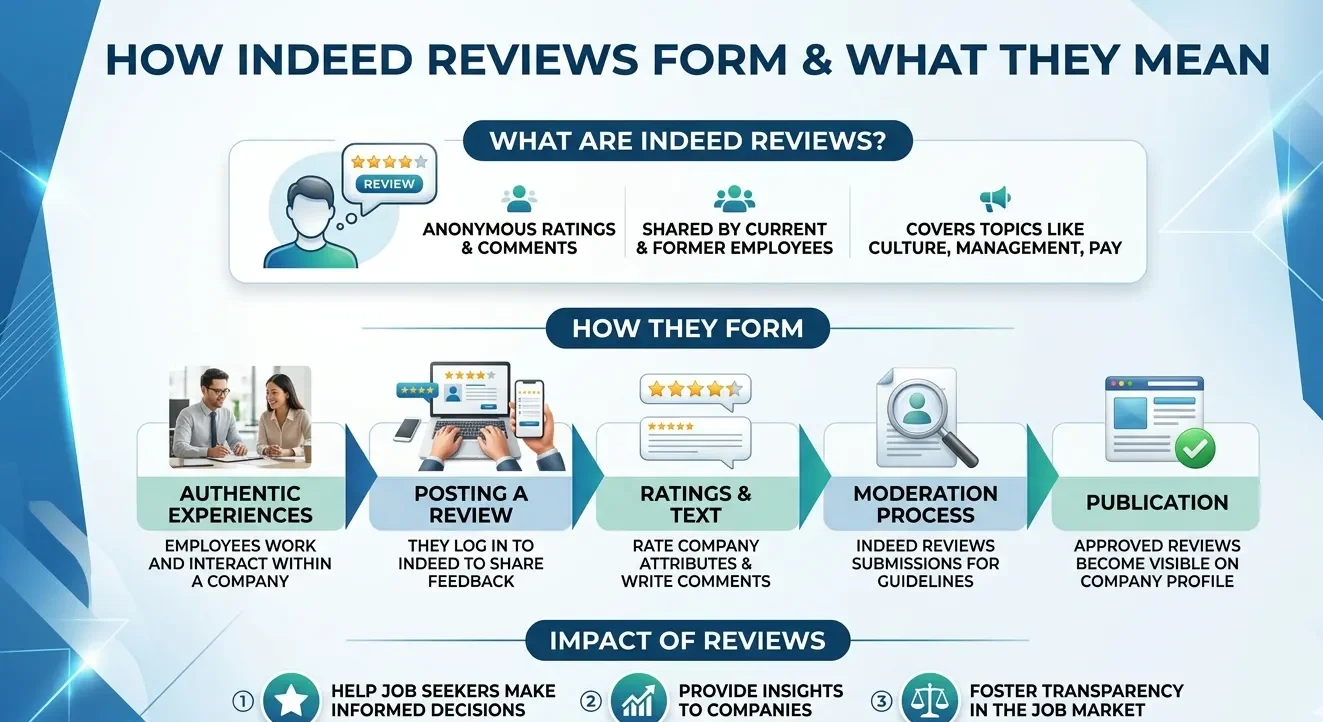

Indeed reviews are user-generated evaluations of employers, job postings, and workplace conditions that search engines and job seekers interpret as evidence of an organisation’s trustworthiness and culture.

Indeed reviews are star ratings and written feedback that candidates and employees submit about companies, salaries, and working conditions. Within Indeed’s ecosystem, these reviews act as primary trust signals for both prospective employees and external observers, including the search engines that index the listing. Each review contributes to the employer’s overall rating, its sentiment distribution, and how the employer is represented in search engine results pages (SERPs).


Reviews form through a moderated user-submission system. Users create Indeed accounts, verify their employment status where required, and then submit ratings and free-text comments. The platform applies content-moderation rules that filter out extreme language, fraud, harassment, and other policy violations. Reviews that pass moderation are published to a public cluster attached to the employer’s listing.

The impact on how the employer is perceived is substantial. A 3.2 average across 1,200 reviews sends a different credibility signal from a 4.7 average across 300 reviews, even if every review in both sets is authentic. Clusters of negative or positive reviews shape how job seekers judge an employer’s reliability, and search systems may use those clusters when ranking results for “employer review” queries. The resulting reputation signal becomes a persistent feature of the SERP.

Why are Indeed reviews difficult to remove?

Indeed reviews are difficult to remove because the platform’s policy protects user-generated content and permits deletion only under narrow, policy-defined conditions.

Indeed treats review removal as a moderation exception, not a routine right of the employer being reviewed. The platform’s policy states that reviews may be removed only when they violate specific rules, for example by containing racist or discriminatory language, disclosing personal data, constituting harassment, or clearly breaching the site’s terms of service. The default position is retention, even when a review is unfavourable.

The process runs through a layered moderation workflow. When an employer requests removal, Indeed evaluates the review against its published policy categories. The moderation layer checks for explicit rule violations, not for subjective truth or fairness. If the review does not meet a documented threshold, it stays live; the platform does not adjudicate factual accuracy except in extreme cases.
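Indeed does not publish its moderation code, so the following is only a minimal sketch of the “default retain” logic described above. The category names in `POLICY_CATEGORIES`, the `Review` type, and the `evaluate_removal_request` function are hypothetical, and the sketch assumes violations have already been flagged upstream by a classifier or human moderator. It shows that the employer’s stated reason has no bearing on the outcome.

```python
from dataclasses import dataclass, field

# Hypothetical policy categories, loosely based on the examples in the text.
# These names are illustrative assumptions, not Indeed's actual taxonomy.
POLICY_CATEGORIES = frozenset({
    "discriminatory_language",
    "personal_data",
    "harassment",
    "terms_of_service_breach",
})


@dataclass
class Review:
    review_id: str
    text: str
    # Violations flagged by an assumed upstream classifier or moderator.
    flagged_violations: set[str] = field(default_factory=set)


def evaluate_removal_request(review: Review, employer_reason: str) -> str:
    """Return 'remove' or 'retain' for an employer's removal request.

    employer_reason is accepted but deliberately ignored: the decision
    depends only on whether the review matches a documented policy
    category, not on whether the employer considers it unfair or untrue.
    """
    matched = review.flagged_violations & POLICY_CATEGORIES
    # Default position is retention; removal needs an explicit match.
    return "remove" if matched else "retain"


if __name__ == "__main__":
    critical = Review("r1", "Management ignored overtime complaints.")
    doxxing = Review("r2", "Call the manager on ...", {"personal_data"})
    print(evaluate_removal_request(critical, "inaccurate and unfair"))
    print(evaluate_removal_request(doxxing, "contains a phone number"))
```

A review that criticises management but matches no category is retained, while one flagged for `personal_data` is removed. Keeping `employer_reason` as an unused parameter is a deliberate choice in this sketch: it mirrors a policy that checks for rule violations rather than weighing the employer’s objection.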

The effect on search visibility and perception is structural. Because removal is constrained, negative Indeed reviews tend to remain on the employer’s profile for long periods. The employer’s SERP representation includes this body of feedback, which job seekers read as a primary trust indicator. Since negative items cannot be selectively removed, employers must improve their reputation as a whole rather than contest the status of individual reviews.

How does Indeed’s review policy differ from general search-ecosystem rules?

Indeed’s review policy differs from general search-ecosystem rules because it is tightly controlled, centred on users, and explicitly limits employers’ authority to have reviews removed.

Indeed’s review policy defines the conditions under which reviews may be removed and frames user-generated content as a core part of the platform’s value. External search engines index Indeed content as authoritative third-party feedback, but they do not control its moderation logic. The distinction matters because removal decisions are made under Indeed’s internal policy, not in response to external SEO signals.

In practice, Indeed aligns its internal policy with broader anti-discrimination, anti-harassment, and data-protection standards. It does not remove reviews simply because they are negative or factually disputed; removal is reserved for reviews that breach explicit policy categories. Search engines see the same content regardless of the employer’s objections, so the review policy acts as a hard constraint on reputation management.

The effect on how employers are perceived is one-sided. An employer cannot selectively remove adverse reviews, but the platform can remove widespread abuse patterns that would damage its own credibility. The result is a reputation environment tilted toward openness, even when that openness creates risk for individual employers. An employer’s search presence must therefore work within the limits of Indeed’s editorial control.

How does Indeed handle authenticity and fake‑review detection?

Indeed handles authenticity and fake-review detection by applying automated filters, behavioural analysis, and policy gating to block mass-fabricated or coerced feedback.

Indeed defines authentic reviews as those that are genuinely user-driven rather than coordinated, incentivised, or scripted. The platform uses language-model analysis, account-behaviour tracking, and review-pattern detection to identify clusters that resemble bulk fake-review campaigns. Reviews that share similar phrasing, posting rhythms, or author profiles are flagged for human moderation.

Detection operates in three stages. First, the system compares text across reviews submitted for the same employer; high lexical overlap raises a fraud-probability score. Second, it tracks review velocity: a listing that receives 50–100 reviews within 48 hours from low-history accounts is sent for manual review. Third, it checks user history and interaction patterns to distinguish genuine employees from anomalous accounts.
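Indeed’s actual detection logic is not public, so the following is a minimal sketch of the three stages under stated assumptions. It assumes word-level Jaccard similarity as the measure of “lexical overlap” and uses account age plus prior-review count as a simplified stand-in for the user-history check. Only the 50-review, 48-hour velocity figure comes from the text; `SIMILARITY_THRESHOLD`, the `LOW_HISTORY_*` limits, and all function names are hypothetical. Instead of a numeric fraud score, it returns the reasons a listing would be routed to a human.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from itertools import combinations

# Hypothetical thresholds: only the 50-review / 48-hour velocity figure
# comes from the text; the others are illustrative assumptions.
SIMILARITY_THRESHOLD = 0.8
VELOCITY_WINDOW = timedelta(hours=48)
VELOCITY_THRESHOLD = 50
LOW_HISTORY_MAX_AGE_DAYS = 30
LOW_HISTORY_MAX_PRIOR_REVIEWS = 1


@dataclass
class Submission:
    text: str
    posted_at: datetime
    account_age_days: int
    prior_reviews: int


def jaccard(a: str, b: str) -> float:
    """Word-level Jaccard similarity: shared words / all distinct words."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    if not words_a and not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


def is_low_history(s: Submission) -> bool:
    """Stage 3 (simplified): a new account with almost no prior reviews."""
    return (s.account_age_days < LOW_HISTORY_MAX_AGE_DAYS
            and s.prior_reviews <= LOW_HISTORY_MAX_PRIOR_REVIEWS)


def count_similar_pairs(subs: list[Submission]) -> int:
    """Stage 1: number of review pairs whose overlap meets the threshold."""
    return sum(
        1 for a, b in combinations(subs, 2)
        if jaccard(a.text, b.text) >= SIMILARITY_THRESHOLD
    )


def max_low_history_burst(subs: list[Submission]) -> int:
    """Stage 2: most low-history submissions inside any VELOCITY_WINDOW."""
    times = sorted(s.posted_at for s in subs if is_low_history(s))
    best, start = 0, 0
    for end in range(len(times)):
        # Shrink the window from the left until it spans at most 48 hours.
        while times[end] - times[start] > VELOCITY_WINDOW:
            start += 1
        best = max(best, end - start + 1)
    return best


def manual_review_reasons(subs: list[Submission]) -> list[str]:
    """Return the reasons (if any) to route a listing's reviews to a human."""
    reasons = []
    if count_similar_pairs(subs) > 0:
        reasons.append("high lexical overlap")
    if max_low_history_burst(subs) >= VELOCITY_THRESHOLD:
        reasons.append("low-history velocity burst")
    return reasons
```

For example, 60 reviews from week-old accounts posted 30 minutes apart fall inside one 48-hour window and trigger the velocity reason, while two near-identical reviews from established accounts trigger only the overlap reason. The pairwise similarity check grows quadratically with the number of reviews, which is acceptable for a sketch but not for very large listings.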

The effect on how search results are evaluated is partial. Indeed may remove or suppress clusters that clearly violate policy, but it does not actively remove every questionable review. Search engines inherit the platform’s moderation decisions, so only the most egregious fake-review clusters disappear. The line between authentic and fabricated content remains imperfect, and the reputation signal may still contain some fabricated reviews.

How do Indeed reviews affect an employer’s credibility in search results?

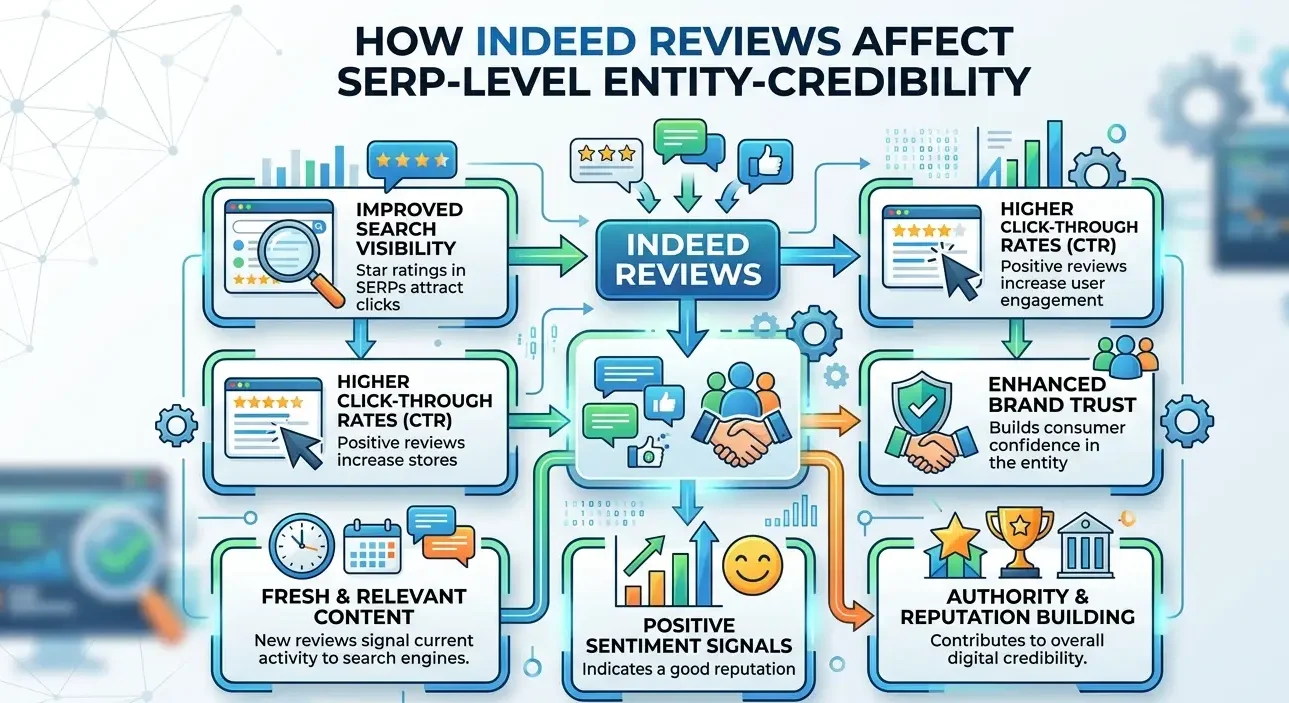

Indeed reviews affect an employer’s credibility in search results by embedding reputation signals into search visibility, job seekers’ decisions, and wider evaluations of the employer’s digital footprint.

Indeed employer profiles appear in search results as high-authority third-party sources because the platform is recognised as a major job marketplace. The cluster of Indeed reviews forms a visible reputation layer alongside job postings, company websites, and news articles, and search engines treat it as one input to how the entity is perceived.

The influence on search visibility can be substantial. Listings with strongly negative review clusters often appear lower in some employer-search contexts, or are accompanied by warning indicators and less prominent placement. The review layer effectively serves as a proxy for workplace risk, which can make the employer less attractive to candidates. The reverse holds for strongly positive clusters, which can lift a listing’s visibility and the likelihood that it is clicked.

The effect on perception extends beyond the SERP. Indeed reviews are often cited elsewhere, for example on comparison sites, in labour-market analyses, and on professional profiles. The platform’s reputation signal thus feeds into a wider information network that amplifies both positive and negative clusters. Because Indeed reviews are durable, that signal stays active across long search cycles.

How does Indeed’s removal policy align with UK labour and reputation frameworks?

Indeed’s removal policy aligns with UK labour and reputation frameworks by protecting legitimate criticism and limiting removal to cases of clear misconduct, abuse, or data-protection violations.

UK and EU frameworks treat employee feedback as a core component of labour-market transparency and workplace accountability. Under these frameworks, employers should not be able to suppress genuine employee expression, and Indeed’s policy reflects that principle by restricting who can have reviews removed. The platform permits deletion only when a review breaches explicit policy conditions, such as harassment, illegal content, or violations of data-protection law.

Indeed reviews are hard to remove because the platform’s moderation rules and policy design put user-generated authenticity and legal protections ahead of employers’ removal requests. Intervention is limited to clear misconduct, so the reviews embedded in an employer’s SERP representation tend to be more durable and harder to curate than the employer would like. The resulting reputation is shaped jointly by Indeed’s moderation logic, the way search engines interpret its content, and the long-term persistence of reviews, which together determine how employers appear when job seekers search for them. These constraints on removal reinforce Indeed’s role as a transparent third-party feedback layer rather than a pliable branding tool.